Google may be helping bad tech happen again — this time on the US border

Google Cloud is a money maker.

It looks like Google is doing it again, and of course, it's all about AI.

The company's Google Cloud hosting program is reportedly facilitating a hare-brained scheme to use AI to catch "mules" and smugglers on the southern U.S. border. While Google's own AI services aren't being used, it's right in the thick of things where all the money is.

Google should know better.

One of the web's longest-running tech columns, Android & Chill is your Saturday discussion of Android, Google, and all things tech.

What's going on here?

I'll start with a short explainer for people who aren't in the U.S. and might wonder what all this fuss is about.

People coming illegally into the U.S. across the southern border is one of the most polarizing issues in the United States. Half the country hates it, and half of those people hate even the legal immigration of people from another culture. The other half knows it's a problem but hates the first half of the people enough to pretend it's not. Yeah, it's stupid, but it is what it is.

With that out of the way, here's what's happening and how Google is reportedly involved. According to The Intercept, U.S. Customs and Border Protection plans to revamp some old surveillance towers around Tucson, Arizona.

They want to install equipment that will use AI to identify every person and vehicle that approaches the border. That's OK, and even my cheap-ass Nest camera can do it. But (there's always a but) they want to use IBM's Maximo inspection software, which is usually put in factory machines to do quality control inspections, to ferret out people who carry backpacks or otherwise look like they want to do some crimes or something.

Google (and Amazon, of course) is allegedly facilitating this by providing hosting services for data streams and tools to train AI. There is surely a lot of money involved, and Google is thirsty for it.

A few years ago, Thomas Kurian, Google Cloud CEO, said the company wasn't going to do anything to help create a "virtual border wall," but that was then, and this is now. Android Central has reached out to Google for a comment on its involvement with the project and will update this article when we hear back.

But let's be clear: Google is not providing any of its own AI tools to make any of this happen, so even if it is involved, it's not directly being evil. It's just profiting from it.

Google knows better

You might be asking, "Jerry, why is protecting the border something evil?" It's not. It's 100% definitely not evil in any way, shape, or form. A country needs to be able to control how people come in and out and what they carry through. I'm not part of the half who hate the other half enough to hide my head in the sand.

But there is a right way and a wrong way. First, you can't dehumanize people who just want a better life. This is the biggest point of contention among the U.S. populace, and you have to admit the current political administration has said and done some pretty dehumanizing things.

Doing anything to help here is a bad PR move for any company, let alone one the size of Google. The optics of this are terrible, and most of the people working for Google and using its products aren't going to like it. Best of all, internet tech websites will make sure everyone knows about it; it's what we do best.

It's probably not going to work, because AI

The other issue is that Google knows this isn't going to work but is probably still salivating to get involved and collect a huge amount of taxpayer money for hosting services. Sure, it doesn't have to be responsible for the problems and directly do evil shit, but it gets to sit back and watch, pass Go, and collect its money.

AI can be trained to find people wearing backpacks. That's probably simple to do; tedious and time-consuming, but training an AI for this is possible right now. Then what?

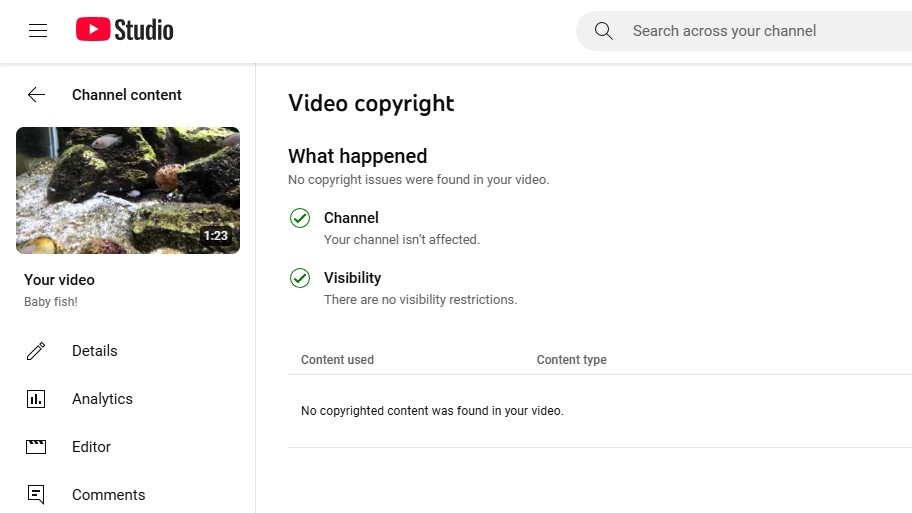

You aren't naive enough to think some bad stuff isn't going to happen to anyone targeted by an AI super-robo-border-camera. Google definitely isn't. Google uses AI to monitor YouTube (along with other things), and even that is a bona fide mess that Google can't control. If AI can't learn to spot what is copyright protected and what isn't, it's not good enough to do anything to a human being.

The best thing that could happen is that a truck filled with real human agents intercepts the target. I won't speculate on the worst-case scenario. Still, bad guys will be caught. Innocent people will also be caught.

I wouldn't go hunting or backpacking around Tucson if I were you.

Assuming Google really is involved, it isn't really in the wrong and is simply fulfilling another government cloud hosting contract. It's just one that's tied to a deeply divisive political issue, and the company should know better.

It would be the same if Google were to develop an online repository to help register and track every gun in America: half the country would go crazy, and the other half would say it's great. It's not great — it's stupid, and there are plenty of different ways to get that government money.

Jerry is an amateur woodworker and struggling shade tree mechanic. There's nothing he can't take apart, but many things he can't reassemble. You'll find him writing and speaking his loud opinion on Android Central and occasionally on Threads.
